Data Center

Challenges

The data center industry is under constant pressure to keep pace with, and sometimes stay ahead of, rapid technological change and growing customer demand. It is increasingly difficult for data center engineers and managers to maintain high uptime, control costs, and deploy quickly all at once. Between idle physical space, capacity, power, cooling, and every other resource a data center consumes, staying ahead of the curve is becoming a challenge.

To avoid downtime, data center managers often over-provision, which wastes resources, space, power, and energy. The data center sector is estimated to account for 1.4% of global electricity consumption. The industry is often troubled by its massive energy consumption and by rising temperatures. Typically, more energy is found to be wasted than used at a data center. As data volumes grow, data center capacity remains an open question that needs to be addressed.

Data center processing has reached an inflection point: the heat generated by chips and servers is so high that the cooling methods most data centers employ are no longer fully viable. Demand for video streaming, application services, and data-intensive technologies such as artificial intelligence (AI), IoT, and 5G is growing rapidly. High-performance computing and cryptocurrency mining often require cooling capacity beyond what air and other traditional cooling methods can provide.

If we’re going to take infrastructure into the next century, everyone building, operating, and using data centers needs to focus on making improvements that support the new infrastructure equation. Broader accessibility, ease of deployment, scalability, and affordability all need to be present for the kind of agile, resilient, high-performance computing needed to support digital transformation today and sustainable growth for decades to come.

Game-Changing Technology Solution

Our approach to immersion-cooled modular data centers allows for expedited infrastructure design and development, with leading-edge immersion cooling technology delivered as a turnkey solution. Building a customized modular immersion-cooled data center with our strategic partner gives our customers a full team of project management and integration specialists, combined with the latest network technology in data center development. Each facility features an efficient modular design and immersion cooling technology that significantly reduces capital and operational expenses, improves performance and hardware reliability, and can operate in confined spaces or extreme environments, all with a short time to market.

Immersion cooling is drawing clients away from traditional data centers as a logical transition, with significant advantages for both CPU and GPU workloads. Advantages include higher efficiency and energy savings, improved computing performance and hardware reliability, and lower maintenance requirements. Adding to the value proposition, our modular data center designs are less complicated because they eliminate the need for complex airflow management, which reduces the footprint by up to 90%. Average time to market is 67% faster than for a traditional data center.

What is Two-Phase Immersion Cooling?

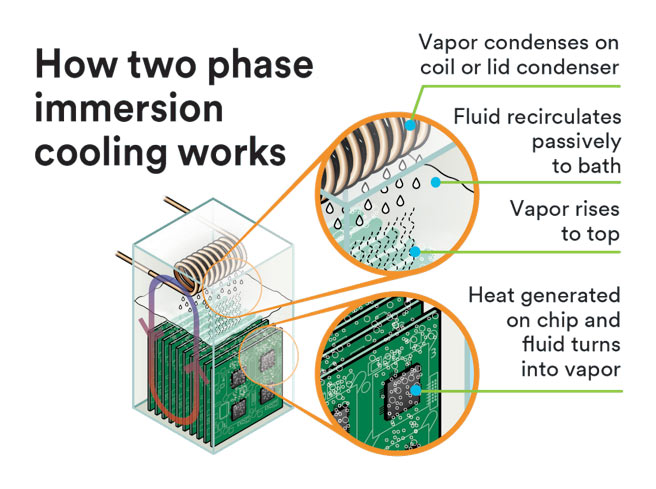

This technology immerses servers and other IT equipment in a non-conductive fluid that has excellent thermal characteristics, providing thousands of times more heat rejection than air cooling. The server components (such as CPUs, GPUs, ASICs, and power supplies) heat the fluid until it boils into a vapor. The heat energy in the vapor is then transferred to a condensing coil placed just above the ‘vapor zone’, which rejects that heat to an outside fluid loop typically connected to a fluid cooler (also known as a dry cooler, since no water is consumed to reject the heat). The condensed vapor falls back into the tank as a liquid, completing a self-contained, two-phase cooling cycle: liquid to vapor to liquid.

The fluids we will use in our two-phase immersion cooling tanks are manufactured by 3M. They are not classified as flammable, are not oil-based, are low in toxicity and non-corrosive, and have good material compatibility and thermal stability. They also have a low global warming potential (GWP) and zero ozone depletion potential (ODP).

Major Advantages of Two-Phase Immersion Cooling

Data centers using two-phase immersion cooling have numerous advantages over traditional air-cooled data centers. From reduced CAPEX and OPEX to improved efficiency, hardware reliability, performance, and density, two-phase immersion cooling is the answer that data centers have been looking for. The sections that follow cover the main drivers and advantages of this technology.

Largest Factor Driving the Need for Two-Phase Immersion Cooling

There are many factors driving the need for this revolutionary technology:

1) Data center efficiency gains have stalled since 2018

2) Chip power / IT power densities are increasing rapidly

3) Data center water use has surpassed energy use as an environmental concern

4) Compute power is moving toward the edge and compacting

5) Data center e-waste is a growing problem

6) Billions are being invested in corporate sustainability initiatives

The largest single factor, and the one that must be addressed, is that chip power and IT power densities are increasing rapidly. While Moore’s Law is popularly summarized as processor performance doubling every eighteen months, the speed of AI processing is now reported to double roughly every three and a half months. Handling these speeds requires the most powerful chips ever designed, and these chips generate massive amounts of heat, which cannot be effectively or efficiently cooled with air.
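To make the gap between the two doubling periods quoted above concrete, the following minimal sketch converts each into a growth factor per year. The function and constant names are illustrative, and the calculation assumes smooth exponential growth, which is a simplification.

```python
def growth_factor(months: float, doubling_period_months: float) -> float:
    """Multiplicative growth after `months`, assuming steady exponential doubling."""
    return 2 ** (months / doubling_period_months)


# Doubling periods quoted in the text (names are illustrative).
MOORE_DOUBLING_MONTHS = 18.0
AI_DOUBLING_MONTHS = 3.5

for label, period in [
    ("18-month doubling", MOORE_DOUBLING_MONTHS),
    ("3.5-month doubling", AI_DOUBLING_MONTHS),
]:
    # Growth over a 12-month window for each doubling period.
    print(f"{label}: x{growth_factor(12, period):.1f} per year")
```

Under these assumptions, an 18-month doubling period works out to roughly 1.6x growth per year, while a 3.5-month doubling period works out to nearly 11x per year.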

For example, in April 2021 Cerebras announced its WSE-2 chip, which boasts 2.6 trillion transistors and 850,000 AI-optimized cores; the CS-2 system built around it draws about 23 kW of power. Most air-cooling systems in data centers can only handle about 8 kW to 12 kW per rack, so even though you could fit three of these systems in a rack, you might not be able to blow enough air through the rack to cool even one of them. Even if you did achieve an air-cooling solution, with AI processing demands doubling roughly every quarter, this approach still wouldn’t be sustainable for long.
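The rack arithmetic above can be sketched as a simple heat budget: a rack can host only as many units as both its physical space and its cooling capacity allow. The sketch below uses the figures quoted in this article (23 kW per unit, 8 to 12 kW per air-cooled rack, three units per rack, and the 100 kW per rack immersion figure cited later); the function and constant names are hypothetical, and real sizing would also account for power delivery and safety margins.

```python
import math

# Figures quoted in this article; names are illustrative.
UNIT_DRAW_KW = 23.0                 # power draw quoted for one WSE-2 based unit
AIR_COOLED_RACK_KW = (8.0, 12.0)    # typical air-cooled rack capacity range
IMMERSION_RACK_KW = 100.0           # immersion capacity quoted later in the article
PHYSICAL_SLOTS = 3                  # units that physically fit in one rack


def units_coolable(rack_cooling_kw: float, unit_kw: float, physical_slots: int) -> int:
    """Units a rack can host, limited by both space and heat removal."""
    by_heat = math.floor(rack_cooling_kw / unit_kw)
    return min(physical_slots, by_heat)


for capacity_kw in (*AIR_COOLED_RACK_KW, IMMERSION_RACK_KW):
    n = units_coolable(capacity_kw, UNIT_DRAW_KW, PHYSICAL_SLOTS)
    print(f"{capacity_kw:>5.0f} kW of rack cooling -> {n} unit(s)")
```

With these inputs, neither end of the air-cooled range can support even one unit, while a 100 kW budget covers all three physical slots.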

The GPU “Heat Wave” is Here to Stay

High-performance computing (HPC) and data-intensive technology applications like machine learning, artificial intelligence, crypto mining, and high-definition video processing use servers and chips that produce far too much heat for air cooling to be practical or efficient.

Next-gen, GPU-accelerated apps are opening up tremendous possibilities across industry segments such as financial modeling, cutting-edge scientific research, and oil and gas exploration. In fact, according to Forbes, all 10 of the top 10 consumer Internet companies, all 10 of the top 10 automakers, and 9 of the top 10 hospitals have already adopted GPU technologies. Most experts would agree that this trend will accelerate over the coming months and years.

According to Dr. Satoshi Matsuoka of the Tokyo Institute of Technology, “Within five years…ten years on the outside, there will be no alternative to immersion cooling.” Immersion is ideal for cooling GPU-accelerated servers because it can handle up to 100 kW per rack, almost 10X that of some air-cooled racks, which means immersion-cooled racks can be fully loaded with GPU-based servers.

Game-Changer

Immersion cooling offers many benefits compared to traditional air cooling, including higher thermal efficiency (i.e., lower PUE), better performance, and greater reliability. Immersion cooling also eliminates the need for complex airflow management systems. Optimized immersion-cooled data centers can lead to reductions in capital and operating expenses, as well as a reduction in construction time and complexity. The increased compute density from immersion cooling allows for more flexible data center layouts and removes barriers to data center location choices, such as high real estate costs or space limitations. Finally, two-phase immersion cooling can help eliminate the tradeoff between water usage, energy efficiency, and cost by eliminating the need for the chillers with economizers and complex controls used in air cooling. This in turn helps eliminate the water otherwise needed to cool a data center.

Given all these advantages and the factors driving this new technology, modular data centers equipped with two-phase immersion cooling are a tailor-made replacement for outdated air-cooled data centers, which are adversely impacting our environment. Add a green energy source such as nuclear or solar power, and the new data center built on our two-phase immersion cooling solution is a definite game-changer for all.

For more information, please contact us at info@bcrsinc.com

According to NREL, studies show a wide range of PUE (power usage effectiveness) values for data centers, but the overall average tends to be around 1.8. Data centers focusing on efficiency typically achieve PUE values of 1.2 or less. PUE is the ratio of the total power used by a data center facility to the power delivered to its computing equipment. Efficiency measurements such as PUE help data center owners and operators gauge their overall operations and identify opportunities to increase efficiency.
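As a minimal sketch of the PUE definition above, the snippet below computes PUE from total facility power and IT power, then shows how much non-IT overhead (cooling, power conversion, lighting, and so on) the two quoted PUE values imply. The 1,000 kW IT load is a hypothetical figure chosen only for illustration, and the function names are not from any particular tool.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power usage effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_kw(it_equipment_kw: float, pue_value: float) -> float:
    """Non-IT power implied by a given PUE at a given IT load."""
    return it_equipment_kw * (pue_value - 1.0)


IT_LOAD_KW = 1000.0  # hypothetical IT load, for illustration only

for label, value in [("average (about 1.8)", 1.8), ("efficiency-focused (1.2)", 1.2)]:
    print(f"PUE {label}: {overhead_kw(IT_LOAD_KW, value):.0f} kW of overhead")
```

For this hypothetical 1,000 kW IT load, a PUE of 1.8 implies about 800 kW of overhead, while a PUE of 1.2 implies about 200 kW.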

The modular buildings are delivered under a complete engineering, procurement and construction (EPC) model, including project management, scheduling, and procurement to ensure delivery targets. Whereas traditional air-cooled data centers take anywhere from 12 to 18 months to build, these modular centers can be built in 5 to 7 months, which is roughly 60% less time. Modular data center facilities allow for expedited infrastructure design and development of Edge and campus site expansion solutions with CAPEX and OPEX certainty.
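The roughly 60% figure above can be checked with simple arithmetic. The sketch below assumes the figure compares the midpoints of the two quoted build-time ranges; that is an assumption about how the number was derived, not something the source states, and the names are illustrative.

```python
def time_saved_fraction(traditional_months: float, modular_months: float) -> float:
    """Fraction of build time saved relative to the traditional schedule."""
    return 1.0 - modular_months / traditional_months


# Build-time ranges quoted in the text, in months.
TRADITIONAL_RANGE = (12.0, 18.0)
MODULAR_RANGE = (5.0, 7.0)

# Midpoint comparison (assumed basis for the quoted figure).
midpoint = time_saved_fraction(sum(TRADITIONAL_RANGE) / 2, sum(MODULAR_RANGE) / 2)
# Least favorable case: slowest modular build vs fastest traditional build.
worst = time_saved_fraction(TRADITIONAL_RANGE[0], MODULAR_RANGE[1])
# Most favorable case: fastest modular build vs slowest traditional build.
best = time_saved_fraction(TRADITIONAL_RANGE[1], MODULAR_RANGE[0])

print(f"midpoint: {midpoint:.0%}, range: {worst:.0%} to {best:.0%}")
```

Comparing midpoints (6 months against 15 months) gives 60% less build time; across the extremes of the quoted ranges, the saving runs from about 42% to about 72%.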

Two-phase immersion cooling does not have the HVAC requirements of a traditional air-cooled data center. In fact, the modular design reduces HVAC requirements by 90%, which reduces the total operating expense for electricity by 50%. Modular data centers built for two-phase immersion cooling require no hot/cold aisles, computer room air conditioning (CRAC) units, or raised floors. Additionally, servers require no fans. Due to the superior efficiency of immersion cooling, it is possible to greatly reduce the size of transformers, generators, transfer switches, PDUs, and UPSs. By greatly simplifying the cooling requirements and reducing the size of the required power infrastructure, it is possible to considerably reduce the investment and space requirements of a data center.

With the spread of cloud computing, energy use by data centers is on an upward trend, and society is showing more concern over the environmental performance of data centers. According to Climate Neutral Group, data centers worldwide are estimated to consume about 3 percent of the global electricity supply and account for about 2 percent of total GHG emissions.